A couple of years back, I wrote about IR Drop Analysis in one of my earlier posts. Fortunately. I got to work on IR Drop Analysis more extensively over past couple of months, and I thought I'll share my perspective gained from the work in form of a new post!

Static IR drop can highlight power grid weakness in the design. Static IR drop violations spread all across the design point to the fact that the power grid needs to be re-designed to reduce the overall power grid resistance. There may be cases where static IR drop violations may be concentrated around the regions with inherent power grid weaknesses- like the regions with one-sided power delivery- around the floorplan boundary, around the macros, within the macro channels.

During

static timing analysis, the voltage (Vdd) at all the devices is assumed to be a

constant. Similarly, the ground pin (Vss) is assumed to be held at a constant 0

V. In reality, this voltage is not a constant and it varies with time. This

variance in the voltage on the power and ground lines is referred to as Power

noise and Ground bounce respectively. This noise is collectively referred to as

Power noise. IR drop on the data path cells will impact setup-timing, while on

the clock cells, it may cause both setup and hold timing problems.

|

| Voltage Droop and Ground Bounce |

The robustness of power grid

needs to be tested thoroughly under various modes of operation. These two modes

are referred to as Static IR Drop and Dynamic IR Drop.

I.

Static

IR Drop

Static IR drop takes into account

the average current drawn from the power grid assuming average switching

conditions. This analysis is performed early in the design cycle when

simulation vectors are not quite available to the design teams. Instead, static

IR drop relies on average data switching to compute the average current drawn

from the power grid over 1 clock cycle.

Static IR drop can highlight power grid weakness in the design. Static IR drop violations spread all across the design point to the fact that the power grid needs to be re-designed to reduce the overall power grid resistance. There may be cases where static IR drop violations may be concentrated around the regions with inherent power grid weaknesses- like the regions with one-sided power delivery- around the floorplan boundary, around the macros, within the macro channels.

Power distribution network is

usually a mesh in top most metal layers with strategic drop downs to lower

metal layers which eventually feed the standard cells. Power is routed in top

metal layers to keep the resistance minimum which will also ensure uniform

power delivery to all parts of the chip.

If PDN is not design carefully, it

will result in creation of one-sided power delivery which will create areas of

high resistance.

Power grid strengthening can be

achieved by:

- Making the power grid denser by adding wider PG straps to improve the current conductivity.

- Incrementally inserting via or via ladders along the power grid to drop from a higher metal layer to lower metal layers.

Increasing the clock frequency (with

or without optimizing for higher frequency target) has a direct impact on

static IR drop, because it increases the average current drawn from the power

grid.

|

| Lowering the clock frequency decreases the average current, and hence also decreases static IR drop |

II.

Dynamic IR Drop

Dynamic IR drop, also known as

Instantaneous Voltage Drop (IVD), is the instantaneous drop in the voltage

rails because of high transient current drawn from the power grid. Dynamic IR

drop takes into account the instantaneous current drawn from the power grid in

a switching event. This analysis is usually performed towards the end of design

cycle when design team has the simulation vectors available from their

functional or test pattern simulations. This mode of analysis is most time

consuming, but nevertheless critical to ensure no surprises on silicon.

Dynamic IR drop is a function of:

- Power Distribution Network (PDN): Just like the static IR drop, weak PDN affects dynamic IR as well. A weaker power grid is not equipped to meet the peak current demand by switching standard cells and it exacerbates the dynamic IR drop.

- Simultaneous Switching: Higher simultaneous switching of standard cells tends to create local hotspots where peak current demand is higher, which causes voltage to drop in these hotspots.

Potential ways to mitigate

dynamic voltage drop are as follows:

- Augmenting the power grid to minimize PG resistance- Adding more power/ground straps facilitate better distribution of current to the standard cells, thereby reducing the susceptibility to dynamic IR drop.

- Cell Padding- Another effective way to reduce dynamic IR drop is to space apart cells which switch simultaneously to reduce the peak current demand from the power grid. This works especially well for clock cells which tend to display temporal switching and spatial locality.

|

| Cell Spacing to solve instantaneous voltage drop |

- Downsizing- Downsizing cells reduces the instantaneous current demand, with a possible downside on setup timing.

|

| Downsizing cells to solve instantaneous voltage drop |

- Splitting the output capacitance- The amount of current drawn from the power grid is directly proportional to output capacitance that’s being driven. Splitting the output capacitance can reduce the peak current demand, and also improve timing in most cases.

|

Split output capacitance to reduce peak current drawn

from the power grid

|

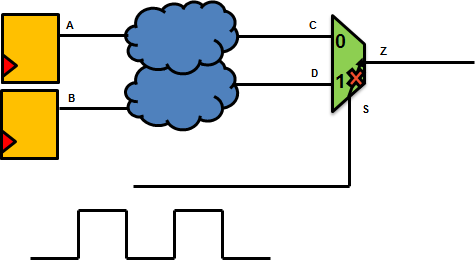

- Inserting decap cells- Decap cells are decoupling capacitors that tend to act as charge reservoirs that can supply current to the standard cells in event of high requirement, especially when there’s simultaneous switching of cells in a local region. However, just like any capacitor, decaps tend to be leaky and add to the leakage power dissipated in the design.

|

| Inserting decaps to minimize dynamic voltage drop |

With shrinking geometries,

designs are moving from gate-dominated designs to wire-dominated designs. Also,

the operating frequencies have been increasing. More signal wires mean lesser

routing resources for the power distribution network. Moreover, lower

technology nodes allow higher packing density of standard cells. Higher

frequencies cause higher switching resulting in higher voltage droop and higher

ground bounce.

Due diligence is necessary not

just to design the power grid but also to analyze and fix the dynamic IR drop

violations to avoid seeing any timing surprises on silicon.